Composite AI systems: Real-world application within Private Equity

Authors: David Branch, Deployment Managing Director, and Akhil Dhir, Principal Data Scientist, at WovenLight.

Unless you’ve been living under a rock, you’ll have noticed that 2023 was a pivotal year for AI, marked by global fascination with Large Language Models (LLMs), which showed what they could do in tasks such as translation and coding from simple prompts. This interest placed LLMs at the forefront of AI application development and sparked curiosity about their fast-evolving capabilities.

However, the landscape is shifting. The way AI is used is ripe for a more strategic approach, one that addresses existing challenges and maximizes the benefit AI can bring to the value creation process.

In private equity, the utility of LLMs is increasingly coming under scrutiny as their real-world performance issues, particularly their propensity to produce credible-sounding yet inaccurate results, become well known. This concern is especially pertinent in due diligence and portfolio value creation. While the ‘creativity’ of LLMs might be valued in the origination phase, where innovative thinking and broad scanning of possibilities are crucial, it poses a significant challenge during the more rigorous stages of investment.

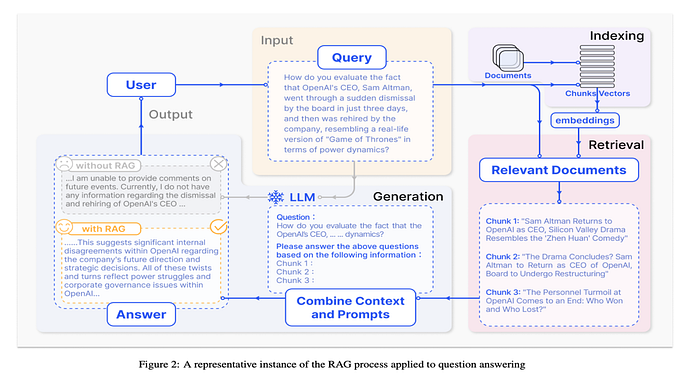

The primary focus in due diligence is obtaining precise and reliable information about potential investments. Deal teams have access to high-quality, often proprietary data, which could never be part of an LLM’s ‘organic’ knowledge base. Consequently, there is a crucial need for a system that can process, store, retrieve and use this data effectively alongside an LLM trained on publicly available data.

The reliability of LLMs is further eroded by model drift, or decay. As these models are updated and as the business landscape evolves, their outputs may become less accurate or relevant. This drift can lead to misinformed decisions, which is particularly problematic in a sector like private equity, where adapting to current market conditions is essential.

To counter these challenges, it’s essential to recognize the limitations of LLMs and complement their use with more robust, data-driven AI tools and frequent model updates.

A composite AI system combines traditional software or models with generative AI models. At its core, an LLM agent acts as the system orchestrator: it can control Retrieval-Augmented Generation (RAG) pipelines and call external tools, giving it access to a broad range of data sources, whether public, private or proprietary.

These systems can not only replicate but also augment the analytical work of a private equity analyst. They bring a new level of sophistication to data processing, supporting more nuanced and comprehensive analysis and decision-making, which is vital in the dynamic private equity landscape.
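
The orchestrator pattern described above can be sketched in a few lines of Python. This is illustrative only and rests on assumptions: a hypothetical `choose_action` function replaces the LLM's routing decision (a keyword rule here, so the example runs offline), an in-memory list stands in for a proprietary document store, and a calculator stands in for an external tool. None of these names come from a specific framework.

```python
from typing import Callable, Dict, List

# Hypothetical in-memory stand-in for a proprietary document store.
DOCUMENTS: List[str] = [
    "Target Co revenue grew 12% in FY2023 according to the data room.",
    "Target Co has 450 employees across three sites.",
]


def retrieve(query: str, k: int = 1) -> List[str]:
    """Naive RAG retriever: rank documents by word overlap with the query."""
    query_words = set(query.lower().split())
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc: len(query_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def calculator(expression: str) -> str:
    """External tool: evaluate a simple 'a op b' arithmetic expression."""
    left, op, right = expression.split()
    operations: Dict[str, Callable[[float, float], float]] = {
        "+": lambda a, b: a + b,
        "-": lambda a, b: a - b,
        "*": lambda a, b: a * b,
        "/": lambda a, b: a / b,
    }
    return str(operations[op](float(left), float(right)))


def choose_action(request: str) -> str:
    """Hypothetical stand-in for the LLM's routing decision."""
    return "tool" if any(ch.isdigit() for ch in request) else "rag"


def orchestrate(request: str) -> str:
    """Route the request either to a tool or to the RAG pipeline."""
    if choose_action(request) == "tool":
        return calculator(request)
    context = retrieve(request)
    # A real system would pass this context to an LLM to draft the answer.
    return f"Answer grounded in: {context[0]}"


print(orchestrate("12 * 3"))
print(orchestrate("How many employees does Target Co have?"))
```

In a production system both the routing decision and the final answer built from the retrieved context would come from model calls; the benefit of the structure is that each branch can be tested and swapped independently.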

The case for Composite AI in PE

In the realm of private equity, composite AI systems stand in stark contrast to general-purpose models like ChatGPT.

ChatGPT excels at processing large volumes of text quickly. Deal-sourcing, for example, which has historically been a labour-intensive task, is now being transformed by GenAI tools. However, limitations soon surface when it comes to the precision and depth required for tasks such as due diligence and portfolio value creation.

Composite AI, by contrast, can integrate and analyze multiple data sources in various formats, which is crucial for a comprehensive understanding of a target company. This is valuable in the intricate work of consolidating, comparing and contrasting extensive document sets: complex questions can be broken down into simpler queries, and the answers synthesised into insights for informed decision-making.

This sophistication matters in private equity, where analysis and reassessment are iterative, especially as data evolves and investment strategies require continual fine-tuning.
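
The decompose, answer and synthesise pattern described above can be sketched as follows. The company names and figures are made up, and the fixed plan returned by `decompose` stands in for what an LLM would normally generate; each sub-query is answered only from that company's own source data before the results are combined.

```python
from typing import Dict, List, Tuple

# Hypothetical figures, as if extracted from each target's own documents.
SOURCES: Dict[str, Dict[str, float]] = {
    "Alpha Ltd": {"revenue_2022": 100.0, "revenue_2023": 118.0},
    "Beta plc": {"revenue_2022": 250.0, "revenue_2023": 265.0},
}


def decompose(companies: List[str]) -> List[Tuple[str, str]]:
    """Split 'which target grew revenue faster?' into one sub-query per company.

    An LLM would normally produce this plan; it is fixed here for clarity."""
    return [(company, "revenue_growth") for company in companies]


def answer(sub_query: Tuple[str, str]) -> float:
    """Answer one simple sub-query from that company's own data only.

    Only the revenue-growth metric is supported in this sketch."""
    company, _metric = sub_query
    figures = SOURCES[company]
    return (figures["revenue_2023"] - figures["revenue_2022"]) / figures["revenue_2022"]


def synthesise(results: Dict[str, float]) -> str:
    """Combine the partial answers into one comparative statement."""
    leader = max(results, key=lambda company: results[company])
    summary = ", ".join(f"{c}: {g:.1%}" for c, g in results.items())
    return f"Revenue growth ({summary}); highest: {leader}"


sub_queries = decompose(list(SOURCES))
results = {sq[0]: answer(sq) for sq in sub_queries}
print(synthesise(results))
```

Keeping each sub-query tied to a single source makes the final comparison traceable: every number in the synthesised statement can be followed back to the document it came from.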

Deploying AI as a composite system offers a more robust approach that reflects real-world nuances and constraints. This focus on operational workflow is required for private equity firms to build repeatable capabilities that capture the real value from AI.

How Composite AI works

Composite AI’s role extends far beyond data processing and trend analysis; it is about creating an interconnected framework that can leverage multiple AI methodologies to mirror the complex decision-making processes of a PE analyst.

Such sophistication cannot be achieved purely through a simple chat interface; it often demands a network of AI agents and tools that work in concert to navigate the vastness of public, private, and proprietary data. The intricate interplay of these systems allows for a level of analysis and insight generation that is unattainable through single, isolated AI models.

Crafting an effective solution in this domain is not straightforward. It calls not for a single, one-size-fits-all approach but for a suite of broad strategic templates. These templates need to accommodate interchangeable components, such as different LLMs (like GPT-4 or Claude 2), vector stores and execution strategies (retrieval and/or generation), while maintaining the overall functionality and performance of the system. There is a very large number of possible combinations of tools to build into a composite system.

For example, even when constructing a RAG pipeline, there are multiple retrievers and language models to choose from. The designer of a composite AI system needs to balance many varied considerations of cost, compatibility, and functional and non-functional component performance.
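
To illustrate why component choices have to be weighed together, the sketch below scores every retriever and generator pairing against a per-query budget. The component names, costs and quality scores are hypothetical placeholders, and `pipeline_score` is a toy assumption (end-to-end quality capped by the weaker component) rather than a real evaluation; in practice the score would come from running each pairing on a labelled test set.

```python
from dataclasses import dataclass
from itertools import product
from typing import Optional, Tuple


@dataclass(frozen=True)
class Component:
    name: str
    cost_per_call: float  # hypothetical cost, arbitrary units
    quality: float        # hypothetical offline quality score in [0, 1]


# Illustrative, interchangeable options; real figures would come from evaluations.
RETRIEVERS = [Component("bm25", 0.01, 0.70), Component("dense", 0.05, 0.80)]
GENERATORS = [Component("small-llm", 0.10, 0.60), Component("large-llm", 1.00, 0.90)]


def pipeline_score(retriever: Component, generator: Component) -> float:
    """Toy end-to-end score: the weaker component caps overall quality."""
    return min(retriever.quality, generator.quality)


def best_combination(budget: float) -> Optional[Tuple[Component, Component]]:
    """Search every retriever/generator pairing that fits the per-query budget."""
    best: Optional[Tuple[Component, Component]] = None
    best_key = (-1.0, 0.0)
    for retriever, generator in product(RETRIEVERS, GENERATORS):
        cost = retriever.cost_per_call + generator.cost_per_call
        if cost > budget:
            continue
        key = (pipeline_score(retriever, generator), -cost)  # higher score, then lower cost
        if best is None or key > best_key:
            best, best_key = (retriever, generator), key
    return best


for budget in (0.5, 2.0):
    pair = best_combination(budget)
    print(budget, pair and (pair[0].name, pair[1].name))
```

With a small budget only the cheap generator is affordable, so the cheaper retriever wins the tie; with a larger budget the better retriever becomes worthwhile because it no longer caps the large model's quality.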

As an example, Medprompt is a composite AI system from Microsoft that generates up to 11 solutions to medical questions by combining GPT-4 with LLM-generated chain-of-thought examples, examples retrieved from a database of solved questions, and ensembling over multiple samples.
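
One ingredient Microsoft described for Medprompt is a choice-shuffle ensemble: the model answers the same multiple-choice question several times with the options reordered, and the answers are combined by majority vote, which dampens any bias towards particular option positions. The sketch below assumes a hypothetical `make_position_biased_stub` in place of GPT-4 and uses cyclic rotations rather than random shuffles so the result is reproducible; it is not Microsoft's implementation.

```python
from collections import Counter
from typing import Callable, List

# A "model" receives a question and an ordered list of options and returns
# the index of the option it picks.
Model = Callable[[str, List[str]], int]


def choice_shuffle_ensemble(model: Model, question: str, options: List[str]) -> str:
    """Ask once per rotation of the options and return the majority answer."""
    votes: Counter = Counter()
    for shift in range(len(options)):
        order = options[shift:] + options[:shift]  # each option visits every position
        picked = model(question, order)
        votes[order[picked]] += 1  # map the chosen position back to the option text
    return votes.most_common(1)[0][0]


def make_position_biased_stub(correct: str) -> Model:
    """Hypothetical stub model: right unless the correct option is listed last,
    in which case it wrongly picks the first option."""
    def stub(question: str, options: List[str]) -> int:
        index = options.index(correct)
        return 0 if index == len(options) - 1 else index
    return stub


model = make_position_biased_stub("C")
single_order = ["D", "A", "B", "C"]
print(single_order[model("Which option is correct?", single_order)])  # prints D
print(choice_shuffle_ensemble(model, "Which option is correct?", ["A", "B", "C", "D"]))  # prints C
```

A single pass with an unlucky ordering returns the wrong option, while the ensemble recovers the right one because the bias only bites in one of the four orderings.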

DeepMind has developed AlphaCode 2, which combines heavily optimised LLMs fine-tuned for coding problems with clustering models. This composite approach is estimated to perform better than 85% of participants in programming competitions, solving new problems that require a combination of critical thinking, logic, algorithms, coding and natural language understanding.
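
The filter-then-cluster idea behind the AlphaCode systems can be sketched in a few lines: discard sampled programs that fail the problem's example tests, group the survivors by how they behave on extra probe inputs, and submit one program from each of the largest groups. Here the candidate programs are hypothetical lambdas standing in for samples from a code model, and the task (squaring an integer) is a toy; AlphaCode 2 also uses a learned scoring model that this sketch omits.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Program = Callable[[int], int]


def filter_and_cluster(candidates: List[Program],
                       examples: List[Tuple[int, int]],
                       probe_inputs: List[int],
                       max_submissions: int = 2) -> List[Program]:
    # 1. Keep only candidates that pass the problem's example tests.
    passing = [p for p in candidates if all(p(x) == y for x, y in examples)]
    # 2. Group survivors by their outputs on extra probe inputs.
    clusters: Dict[Tuple[int, ...], List[Program]] = defaultdict(list)
    for program in passing:
        clusters[tuple(program(x) for x in probe_inputs)].append(program)
    # 3. Submit one representative from each of the largest clusters.
    ranked = sorted(clusters.values(), key=len, reverse=True)
    return [group[0] for group in ranked[:max_submissions]]


# Hypothetical "sampled" solutions to: return the square of x.
candidates: List[Program] = [
    lambda x: x * x,
    lambda x: x ** 2,
    lambda x: x + x,                   # fails the example tests
    lambda x: x * x if x < 10 else 0,  # passes the examples, wrong for large x
    lambda x: 3 * x - 2,               # fails the example tests
    lambda x: abs(x) * x,              # passes the examples, wrong for negatives
]
examples = [(2, 4), (3, 9)]
submissions = filter_and_cluster(candidates, examples, probe_inputs=[-1, 10])
print([program(7) for program in submissions])
```

Clustering by behaviour means that programs written differently but computing the same thing (`x * x` and `x ** 2`) count as one candidate, so the limited number of submissions is spread across genuinely different solutions.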

Inevitably, the added complexity of a composite AI system makes its design, deployment and optimisation harder and slower than for a straightforward LLM. Components may need to be optimised jointly, as a whole, rather than individually before being combined. In some of the most complex composite AI systems, another AI tool may be used to help optimise the components. And while the overall performance of the system should be measurable, assessing how individual components are performing is difficult, and not always meaningful outside the context of the wider system.

Benefits of using Composite AI

Composite AI systems are of particular use within the private equity ecosystem for three reasons:

1. Value for money and time to value: the cost of compute remains high, even when using cloud infrastructure. Whilst there is a well-understood correlation between computing power and the performance of LLMs, the ‘law of diminishing returns’ is clearly in evidence here, and reaching the required level of performance from an LLM alone may only be possible with very significant further investment. It is often more cost-effective to build a composite system that achieves the same result than to increase the computing power used to scale an existing LLM.

For example, if a model’s outputs are scored for success and that feedback is fed back into the system, it is sometimes possible to lift an existing LLM’s performance well beyond its off-the-shelf level without buying additional computing power. Techniques such as this can also be significantly faster than scaling up a model.

Interestingly, in a recent Microsoft project, the prompting strategy developed for a medical use case proved valuable, without any domain-specific changes, on professional competency exams in other areas such as electrical engineering, machine learning, philosophy, accounting, law and psychology. In other words, a prompting approach engineered for one domain brought performance benefits in entirely different domains. This potentially offers a compelling route for PE firms to deploy at scale across their portfolios.

2. Provenance tracking and trust: a deep learning solution is inherently unpredictable and may produce results that need to be overridden or ignored for reasons of compliance, legality or customer perception. A composite AI system can help solve this issue, for instance by combining an LLM with a rules engine that filters out or flags certain types of result, or by embedding provenance tracking so that specific outputs can be fact-checked against their source if needed.

3. Configuration for multiple user bases: as LLM deployments grow, the inputs to models will increasingly need careful management to prevent confidential information from being released in the output. For instance, when an LLM is employed to monitor an organization’s entire email archive for compliance, the system must be designed so that sensitive data, such as HR communications, is not inadvertently revealed in the process. The configuration challenge intensifies when deploying across different jurisdictions, each with its own regulatory demands concerning data privacy and security.

In such an environment, it is not merely a matter of user configuration; it is about architecting a system that can intelligently discern and manage multiple data sources while adhering to stringent security protocols. Composite AI systems provide a strategic advantage here, with their advanced engineering allowing for granular control over data accessibility and user permissions.
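
The first reason above mentions measuring the success of a model’s output and feeding it back. One simple form of this is self-correction against an automated check, sketched below under stated assumptions: `stub_model` is a hypothetical stand-in for an LLM call, and the check (is the answer an ISO 8601 date?) is a toy example of a success measure. It is one illustrative technique of this kind rather than a prescribed method.

```python
from datetime import datetime
from typing import Callable, List, Optional, Tuple


def check_iso_date(output: str) -> Optional[str]:
    """Return None if the output is a valid YYYY-MM-DD date, else feedback text."""
    try:
        datetime.strptime(output, "%Y-%m-%d")
        return None
    except ValueError:
        return f"'{output}' is not an ISO 8601 date (YYYY-MM-DD); please reformat."


def generate_with_feedback(model: Callable[[str], str], prompt: str,
                           max_attempts: int = 3) -> Tuple[Optional[str], List[str]]:
    """Call the model, check its output, and retry with the feedback appended."""
    history: List[str] = []
    current = prompt
    for _ in range(max_attempts):
        output = model(current)
        feedback = check_iso_date(output)
        if feedback is None:
            return output, history
        history.append(feedback)
        current = f"{prompt}\nPrevious answer: {output}\nFeedback: {feedback}"
    return None, history  # no acceptable answer within the attempt limit


def stub_model(prompt: str) -> str:
    """Hypothetical LLM stand-in: uses UK date style unless told about ISO 8601."""
    return "2023-12-31" if "ISO 8601" in prompt else "31/12/2023"


prompt = "Extract the completion date from: 'Completion occurred on 31 December 2023.'"
result, history = generate_with_feedback(stub_model, prompt)
print(result, len(history))
```

No extra compute is bought here: the same model is simply called again with information about why its previous answer failed the check.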
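
The rules engine and provenance tracking described in the second reason might look like the following sketch. It assumes the LLM has already been prompted to return its output as discrete claims, each tagged with the IDs of the source documents it relied on; the banned phrases, claims and document IDs are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class Claim:
    text: str
    source_ids: List[str] = field(default_factory=list)  # provenance of the claim


# Hypothetical compliance rule: phrases that must never reach an output.
BANNED_PHRASES = ("guaranteed return", "risk-free")


def review_claims(claims: List[Claim], known_sources: Set[str]
                  ) -> Tuple[List[Claim], List[Tuple[Claim, str]]]:
    """Split LLM output into approved claims and flagged claims with a reason."""
    approved: List[Claim] = []
    flagged: List[Tuple[Claim, str]] = []
    for claim in claims:
        lowered = claim.text.lower()
        if any(phrase in lowered for phrase in BANNED_PHRASES):
            flagged.append((claim, "compliance: banned phrase"))
        elif not claim.source_ids:
            flagged.append((claim, "provenance: no source cited"))
        elif not set(claim.source_ids) <= known_sources:
            flagged.append((claim, "provenance: unknown source"))
        else:
            approved.append(claim)
    return approved, flagged


known_sources = {"data_room/fin_model.xlsx", "cim.pdf"}
claims = [
    Claim("EBITDA margin was 21% in FY2023.", ["data_room/fin_model.xlsx"]),
    Claim("The market is growing quickly."),
    Claim("This deal offers a guaranteed return.", ["cim.pdf"]),
    Claim("Churn fell to 4%.", ["unknown.pdf"]),
]
approved, flagged = review_claims(claims, known_sources)
for claim, reason in flagged:
    print(reason, "|", claim.text)
```

Because every approved claim carries its source IDs, a reviewer can fact-check any statement against the underlying document instead of trusting the model's wording.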
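
For the third reason, one common design is to enforce access rules in the retrieval layer, so that documents a user is not entitled to see never reach the LLM’s prompt. The sketch below assumes hypothetical labels and a simple policy (a user must hold every label on a document and be cleared for its jurisdiction); real deployments would draw these from the firm’s identity and data-governance systems.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    labels: FrozenSet[str]  # hypothetical sensitivity labels, e.g. {"hr"}
    jurisdiction: str       # e.g. "UK", "EU"


@dataclass(frozen=True)
class User:
    name: str
    entitlements: FrozenSet[str]
    jurisdictions: FrozenSet[str]


def permitted(user: User, doc: Document) -> bool:
    """Hypothetical policy: the user holds every label and is cleared for the jurisdiction."""
    return doc.labels <= user.entitlements and doc.jurisdiction in user.jurisdictions


def build_context(user: User, candidates: List[Document]) -> List[str]:
    """Filter retrieved documents before they are placed in the LLM prompt."""
    return [doc.text for doc in candidates if permitted(user, doc)]


docs = [
    Document("email-001", "Grievance raised by employee X.", frozenset({"hr"}), "UK"),
    Document("email-002", "Trade pre-clearance request approved.", frozenset({"compliance"}), "UK"),
    Document("email-003", "Client onboarding checklist.", frozenset({"compliance"}), "EU"),
]
analyst = User("compliance-analyst", frozenset({"compliance"}), frozenset({"UK"}))
print(build_context(analyst, docs))
```

Filtering before generation, rather than asking the model to withhold sensitive material, means the protection does not depend on the LLM following instructions.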

Emerging trends with Composite AI

We see the following trends emerging in the field of composite AI systems:

- Quality Optimisation: To build composite AI systems, you need to break the problem into steps, fine-tune your prompts for each step, adjust how these steps interact, create and use synthetic examples for tuning, and then fine-tune smaller LLMs to save cost. This process can get complicated quickly, especially if you change any part of your system, your LLM or your data, which might require you to redo your prompts or fine-tuning. Fortunately, tools are emerging that make working with LLMs in complex systems easier and more efficient. DSPy, for example, simplifies this process by separating a system’s structure from details such as LLM prompts and weights, and it provides optimisation algorithms that adjust those prompts and weights automatically to achieve better results against a chosen metric.

- Cost Optimisation: Choosing the right AI model from a vast selection for a specific application can be daunting, especially on a limited budget. FrugalGPT, for example, addresses this by routing inputs across different AI models to get the best quality within a set budget. It learns a strategy from a small number of examples that either boosts performance by up to 4% at the same cost or cuts costs by up to 90% without compromising quality. FrugalGPT illustrates a growing trend of AI gateways or routers, which improve the efficiency of AI applications. These gateways are most effective in systems where tasks are divided into smaller, manageable parts, allowing routing to be optimised for each part.

- Monitoring Operational Performance: When AI is deployed for real-world use cases, monitoring the performance of the model and its associated data flows is essential for reliability. This is amplified for composite AI systems, which often involve multiple steps, each of which requires tracking of its actions and outcomes. Tools such as LangSmith, Phoenix Traces and Databricks Inference Tables provide advanced tracking and analysis, sometimes linking results to data pipeline quality and to their impact on final outcomes. Research efforts such as DSPy Assertions, which aim to use monitoring data to improve AI performance directly, are worth keeping an eye on.
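
The quality-optimisation idea of keeping a program’s structure separate from its prompt wording, then choosing the wording automatically against a metric, can be illustrated with plain Python. This is not DSPy’s API: `classify_program`, `optimise_instruction` and `stub_lm` are hypothetical, and the stub stands in for a real LLM that only answers tersely when instructed to.

```python
from typing import Callable, List, Tuple

LM = Callable[[str], str]


def classify_program(lm: LM, instruction: str, text: str) -> str:
    """Program structure: a single 'classify' step whose wording is a parameter."""
    prompt = f"{instruction}\nText: {text}\nAnswer:"
    return lm(prompt).strip().lower()


def exact_match(prediction: str, gold: str) -> float:
    """Metric used to compare candidate instructions."""
    return 1.0 if prediction == gold else 0.0


def optimise_instruction(lm: LM, candidates: List[str],
                         trainset: List[Tuple[str, str]]) -> Tuple[str, float]:
    """Keep the candidate instruction with the best average metric on the trainset."""
    best, best_score = candidates[0], -1.0
    for candidate in candidates:
        score = sum(exact_match(classify_program(lm, candidate, text), gold)
                    for text, gold in trainset) / len(trainset)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


def stub_lm(prompt: str) -> str:
    """Hypothetical LLM stand-in: terse only when told to answer yes or no."""
    mentions_revenue = "revenue" in prompt.split("Text:")[1].lower()
    if "answer yes or no" in prompt.lower():
        return "Yes" if mentions_revenue else "No"
    verb = "does" if mentions_revenue else "does not"
    return f"It seems the text {verb} discuss revenue."


trainset = [("Revenue rose 10% year on year.", "yes"), ("Headcount was flat.", "no")]
candidates = [
    "Does the text discuss revenue?",
    "Does the text discuss revenue? Answer yes or no.",
]
print(optimise_instruction(stub_lm, candidates, trainset))
```

Because the wording lives outside the program’s structure, swapping the LLM or the data only means re-running the selection step, not rewriting the pipeline.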
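
The routing idea behind FrugalGPT can be sketched as a cascade: try the cheapest model first, ask a scorer whether its answer looks reliable, and escalate to a more expensive model only when it does not and the budget allows. The tier names, costs, stub models and the fixed `scorer` are hypothetical; FrugalGPT learns its scorer and routing strategy from examples, which this sketch does not attempt.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Tier:
    name: str
    cost: float                  # hypothetical cost per call, arbitrary units
    model: Callable[[str], str]  # stand-in for an LLM API call


def cascade(query: str, tiers: List[Tier], scorer: Callable[[str, str], float],
            threshold: float, budget: float) -> Tuple[Optional[str], Optional[str], float]:
    """Try tiers cheapest-first; stop when an answer is trusted or the budget runs out."""
    answer: Optional[str] = None
    used: Optional[str] = None
    spent = 0.0
    for tier in tiers:
        if spent + tier.cost > budget:
            break
        spent += tier.cost
        answer, used = tier.model(query), tier.name
        if scorer(query, answer) >= threshold:
            break
    return answer, used, spent


def scorer(query: str, answer: str) -> float:
    """Hypothetical reliability score; FrugalGPT would learn this from examples."""
    return 0.0 if answer == "unsure" else 0.9


tiers = [
    Tier("small-model", 0.1, lambda q: "Paris" if "France" in q else "unsure"),
    Tier("large-model", 1.0, lambda q: "Paris" if "France" in q else "A detailed answer"),
]
print(cascade("What is the capital of France?", tiers, scorer, threshold=0.8, budget=2.0))
print(cascade("Summarise the key risks in the CIM", tiers, scorer, threshold=0.8, budget=2.0))
```

Easy queries stop at the cheap tier, and only the hard ones pay for the expensive model, which is where the cost savings of this kind of router come from.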
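
Step-level tracing of the kind these monitoring tools provide can be approximated with a decorator that records each step’s inputs, output, error and duration. This is a hypothetical, dependency-free sketch, not the API of LangSmith, Phoenix or Databricks; a real system would send these records to a tracing backend rather than an in-memory list.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# In-memory trace store; a real system would export records to a backend.
TRACE: List[Dict[str, Any]] = []


def traced(step_name: str) -> Callable:
    """Record inputs, output or error, and duration for every call of a step."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: Dict[str, Any] = {"step": step_name, "args": args,
                                      "kwargs": kwargs, "error": None}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["output"] = result
                return result
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                record["seconds"] = time.perf_counter() - start
                TRACE.append(record)  # appended when the step finishes
        return wrapper
    return decorator


@traced("retrieve")
def retrieve(query: str) -> List[str]:
    return ["Target Co FY2023 revenue: 118"]  # hypothetical retrieval result


@traced("generate")
def generate(query: str, context: List[str]) -> str:
    return f"Based on {len(context)} document(s): {context[0]}"  # stand-in for an LLM call


@traced("pipeline")
def answer(query: str) -> str:
    return generate(query, retrieve(query))


answer("What was Target Co's FY2023 revenue?")
for record in TRACE:
    print(record["step"], record["error"], round(record["seconds"], 6))
```

Because each step is recorded separately, a poor final answer can be traced back to the step that produced it, for example a retrieval that returned the wrong document.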

It’s not a pure LLM world

As companies strive to capture the performance uplift that AI can bring, many will need to be smarter about how they combine different tools, as doing so can deliver far better results, more cost-effectively, than trying to implement and configure a single tool. Moreover, in the fast-moving world of AI development, new and enhanced tools for various use cases will emerge frequently and will need to be added to existing workflows.

Composite AI installations will therefore become the norm and successful businesses will be those best able to implement and manage them.